Docker Networking Overview

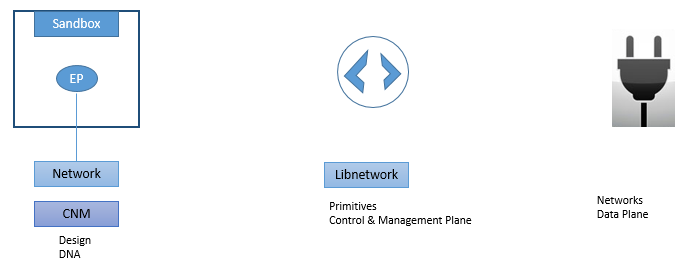

There are three components in Docker Networking:

- The Container Network Model (CNM)

- Libnetwork

- Drivers

CNM: The CNM is the design specification. It defines and outlines the fundamental building blocks of a Docker network.

Libnetwork: It is the real-world implementation of the CNM and is used by Docker, loosely analogous to the way the TCP/IP stack relates to the OSI reference model.

Drivers: Drivers extend the model by implementing specific network topologies, such as VXLAN-based overlay networks.

The figure below summarizes these three components.

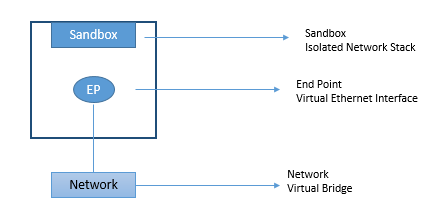

CNM (Container Network Model):

The CNM is the framework that defines how networking for Docker containers should be designed. It consists of three building blocks:

- Sandboxes

- Endpoints

- Networks

Sandbox: An isolated network stack, which includes Ethernet interfaces, ports, routing tables, and DNS configuration.

Endpoints: An endpoint is a virtual network interface that connects a sandbox to a network. Each endpoint attaches to exactly one network.

Networks: A network is a software implementation of an 802.1d bridge (that is, a software-based switch) to which endpoints connect in order to communicate with each other.

The figure below describes the CNM.

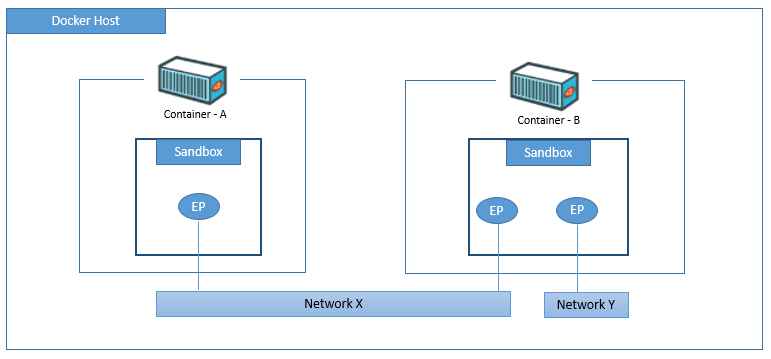

Docker uses the CNM to provide network connectivity to containers. A sandbox is placed inside each container, and the endpoints in that sandbox connect the container to a software-based switch (a network).

The figure below shows two containers. Container A and Container B each have an endpoint connected to Network X, so those two endpoints can communicate with each other. Container B also has a second endpoint on a different network. The two endpoints in Container B cannot communicate with each other, because they are on different networks and would need a router between them.

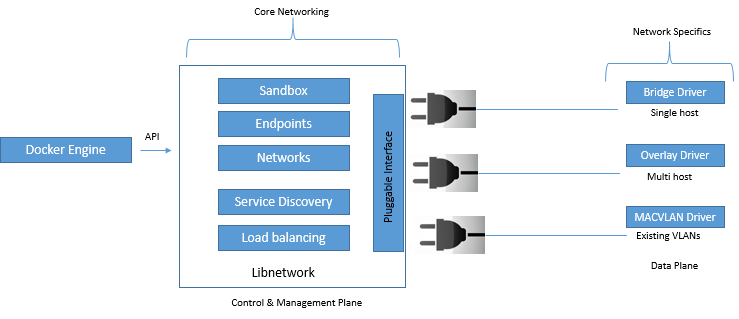
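The reachability rule in the figure can be sketched as a toy model in Python. This is not Docker or Libnetwork code: the `Network`, `Endpoint`, and `Sandbox` classes, the `can_communicate` helper, and the name `Y` for the second network are all hypothetical and exist only to illustrate the CNM building blocks.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Network:
    """A software switch that groups endpoints together."""
    name: str


@dataclass(frozen=True)
class Endpoint:
    """A virtual interface; it attaches to exactly one network."""
    name: str
    network: Network


@dataclass
class Sandbox:
    """The isolated network stack inside one container."""
    container: str
    endpoints: list = field(default_factory=list)

    def connect(self, network: Network) -> Endpoint:
        # Create a new endpoint in this sandbox and attach it to the network.
        endpoint = Endpoint(f"{self.container}-ep{len(self.endpoints)}", network)
        self.endpoints.append(endpoint)
        return endpoint


def can_communicate(a: Endpoint, b: Endpoint) -> bool:
    # Without a router, only endpoints on the same network can talk.
    return a.network == b.network


net_x = Network("X")
net_y = Network("Y")  # hypothetical name for the second network

container_a = Sandbox("A")
container_b = Sandbox("B")

a_on_x = container_a.connect(net_x)
b_on_x = container_b.connect(net_x)
b_on_y = container_b.connect(net_y)

print(can_communicate(a_on_x, b_on_x))  # True: both are on Network X
print(can_communicate(b_on_x, b_on_y))  # False: different networks
```

Note that Container B holds two endpoints in one sandbox, yet being in the same container does not make them reachable from each other; only shared network membership does.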

Libnetwork:

Libnetwork is the real-world implementation of the CNM. It is open source and cross-platform, so it can be used on platforms such as Linux and Windows.

Libnetwork contains the core Docker networking code. Because of this, it provides the following functions:

- Service discovery

- Ingress-based container load balancing

- Control plane and management plane functions
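To make the idea of service discovery more concrete, here is a toy sketch in Python. It is not Libnetwork's code or API: the `ToyResolver` class, the network names, the container name, and the IP address are all hypothetical. It assumes that a container name is resolved only among containers registered on the same network.

```python
from typing import Optional


class ToyResolver:
    """Toy name lookup, scoped per network (illustration only)."""

    def __init__(self) -> None:
        # Maps network name -> {container name -> IP address}.
        self._records: dict = {}

    def register(self, network: str, container: str, ip: str) -> None:
        # Record a container's address under the network it joined.
        self._records.setdefault(network, {})[container] = ip

    def resolve(self, network: str, container: str) -> Optional[str]:
        # Look the name up only within the caller's own network.
        return self._records.get(network, {}).get(container)


resolver = ToyResolver()
resolver.register("appnet", "web", "10.0.0.2")

print(resolver.resolve("appnet", "web"))    # 10.0.0.2
print(resolver.resolve("othernet", "web"))  # None: not on that network
```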

Drivers:

Drivers are responsible for the data plane of containers. The figure below describes how drivers handle the data plane, while the control plane and management plane functions sit in Libnetwork.

By default, Docker ships with several built-in drivers, known as local drivers. On Linux they are bridge, overlay, and macvlan. On Windows they are nat, overlay, transparent, and l2bridge.

Single Host Bridge Network:

A single-host bridge network exists on a single Docker host and can only connect containers that are on that same host, through an 802.1d software bridge.

Docker creates this single-host bridge network with the bridge driver on Linux and with the nat driver on Windows.
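The platform-to-driver mapping can be captured in a few lines of Python. This is a minimal sketch, not part of Docker: the `network_create_command` helper and the network name `localnet` are hypothetical. The function only builds the argument list for the `docker network create -d <driver> <name>` CLI command and does not run it.

```python
# Built-in (local) drivers per platform, as listed in the text above.
LOCAL_DRIVERS = {
    "linux": ["bridge", "overlay", "macvlan"],
    "windows": ["nat", "overlay", "transparent", "l2bridge"],
}

# Driver that creates a single-host bridge network on each platform.
SINGLE_HOST_DRIVER = {"linux": "bridge", "windows": "nat"}


def network_create_command(platform: str, name: str) -> list:
    """Build (but do not run) the CLI arguments for a single-host network."""
    driver = SINGLE_HOST_DRIVER[platform.lower()]
    return ["docker", "network", "create", "-d", driver, name]


print(network_create_command("linux", "localnet"))
# ['docker', 'network', 'create', '-d', 'bridge', 'localnet']
print(network_create_command("Windows", "localnet"))
# ['docker', 'network', 'create', '-d', 'nat', 'localnet']
```

Returning a list rather than a single string keeps the sketch side-effect free and makes it easy to pass to something like `subprocess.run` on a host where Docker is installed.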
